超大数据分页之Twitter的cursor方式进行Web数据分页

发布时间:01/15 来源: 浏览:

关键词:

文章介绍了关于Twitter的cursor方式进行Web数据分页用法,有需要了解大数据分页的朋友可参考一下。

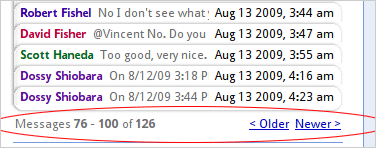

上图功能的技术实现方法拿MySQL来举例就是

select * from msgs where thread_id = ? limit page * count, count

不过在看Twitter API的时候,我们却发现不少接口使用cursor的方法,而不用page, count这样直观的形式,如 followers ids 接口

| 代码如下 | |

|

URL:

http://twitter.com/followers/ids.format

Returns an array of numeric IDs for every user following the specified user.

Parameters:

* cursor. Required. Breaks the results into pages. Provide a value of -1 to begin paging. Provide values as returned to in the response body’s next_cursor and previous_cursor attributes to page back and forth in the list. o Example: http://twitter.com/followers/ids/barackobama.xml?cursor=-1 o Example: http://twitter.com/followers/ids/barackobama.xml?cursor=-1300794057949944903 |

|

http://twitter.com/followers/ids.format

从上面描述可以看到,http://twitter.com/followers/ids.xml 这个调用需要传cursor参数来进行分页,而不是传统的 url?page=n&count=n的形式。这样做有什么优点呢?是否让每个cursor保持一个当时数据集的镜像?防止由于结果集实时改变而产生查询结果有重复内容?

在Google Groups这篇Cursor Expiration讨论中Twitter的架构师John Kalucki提到

在Google Groups这篇Cursor Expiration讨论中Twitter的架构师John Kalucki提到

| 代码如下 | |

|

A cursor is an opaque deletion-tolerant index into a Btree keyed by source

userid and modification time. It brings you to a point in time in the reverse chron sorted list. So, since you can’t change the past, other than erasing it, it’s effectively stable. (Modifications bubble to the top.) But you have to deal with additions at the list head and also block shrinkage due to deletions, so your blocks begin to overlap quite a bit as the data ages. (If you cache cursors and read much later, you’ll see the first few rows of cursor[n+1]’s block as duplicates of the last rows of cursor[n]’s block. The intersection cardinality is equal to the number of deletions in cursor[n]’s block). Still, there may be value in caching these cursors and then heuristically rebalancing them when the overlap proportion crosses some threshold. |

|

在另外一篇new cursor-based pagination not multithread-friendly中John又提到

| 代码如下 | |

|

The page based approach does not scale with large sets. We can no

longer support this kind of API without throwing a painful number of 503s. Working with row-counts forces the data store to recount rows in an O

(n^2) manner. Cursors avoid this issue by allowing practically constant time access to the next block. The cost becomes O(n/ block_size) which, yes, is O(n), but a graceful one given n < 10^7 and a block_size of 5000. The cursor approach provides a more complete and consistent result set. Proportionally, very few users require multiple page fetches with a

page size of 5,000. Also, scraping the social graph repeatedly at high speed is could

often be considered a low-value, borderline abusive use of the social graph API. |

|

通过这两段文字我们已经很清楚了,对于大结果集的数据,使用cursor方式的目的主要是为了极大地提高性能。还是拿MySQL为例说明,比如翻页到100,000条时,不用cursor,对应的SQL为

select * from msgs limit 100000, 100

在一个百万记录的表上,第一次执行这条SQL需要5秒以上。

假定我们使用表的主键的值作为cursor_id, 使用cursor分页方式对应的SQL可以优化为

假定我们使用表的主键的值作为cursor_id, 使用cursor分页方式对应的SQL可以优化为

select * from msgs where id > cursor_id limit 100;